Join StarRocks Community on Slack

Connect on SlackChatGPT has dominated the public's attention over the last few months, creating entirely new industries, tools, and changes to how we work almost overnight. Millions of users across the globe have put ChatGPT's AI power to the test, and while there's no shortage of limitations with the software, some fairly amazing use cases are starting to show up.

One such use case is ChatGPT's ability to make database testing more efficient. Recently, contributors to the open source Linux project StarRocks put ChatGPT to the test against several popular automated testing tools to see what advantages, if any, the AI-powered chat platform could offer.

ChatGPT vs. Popular Database Testing Tools

The results of the StarRocks community's test were impressive. ChatGPT was able to hold its own when put up against other existing, and popular, automated testing tools including SQLsmith and SQLancer. The results of the tests were enlightening, and with ChatGPT's ability to learn and adapt, it seems likely that this is only the beginning of what ChatGPT is capable of for improving database testing.

ChatGPT vs. SQLsmith

SQLsmith is an automated database testing tool that generates random SQL queries based on a fixed table schema and executes the queries against the database to test its performance and robustness.

ChatGPT is well-positioned to replace the work of SQLsmith, and can generate random, complex SQL queries based on natural language inputs with ease. While it's too early to tell if ChatGPT is ready to completely eliminate the need for SQLsmith, it's certainly worth evaluating for anyone already using the tool.

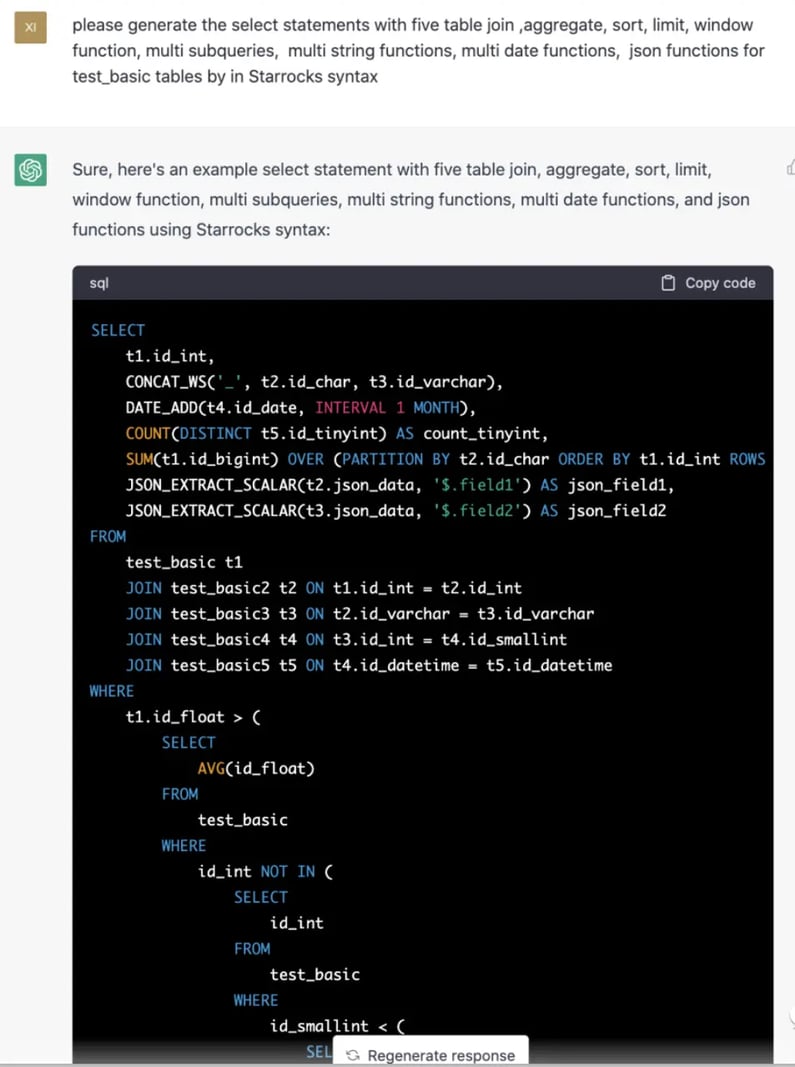

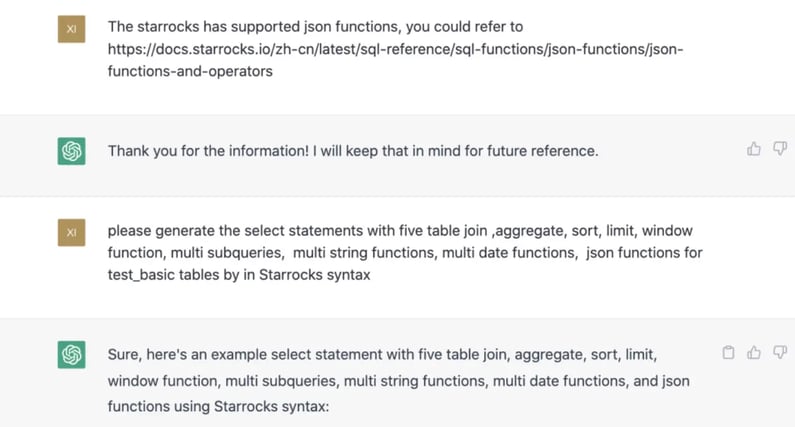

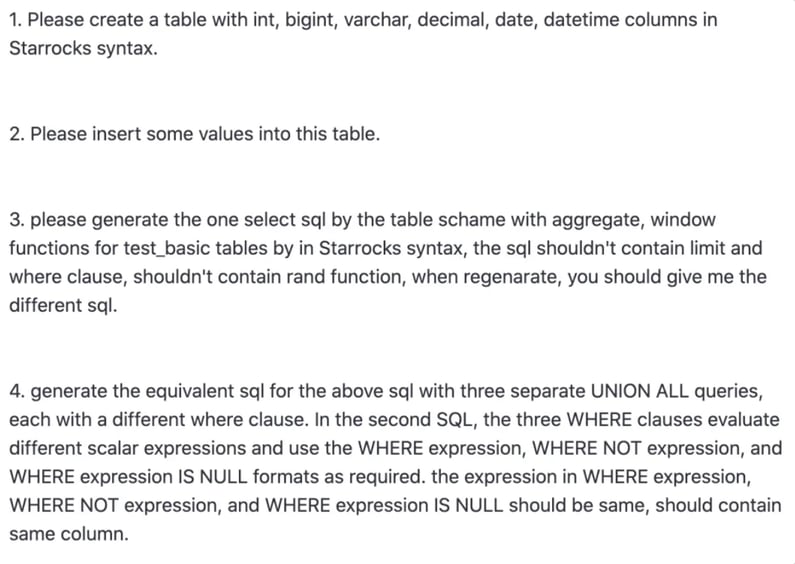

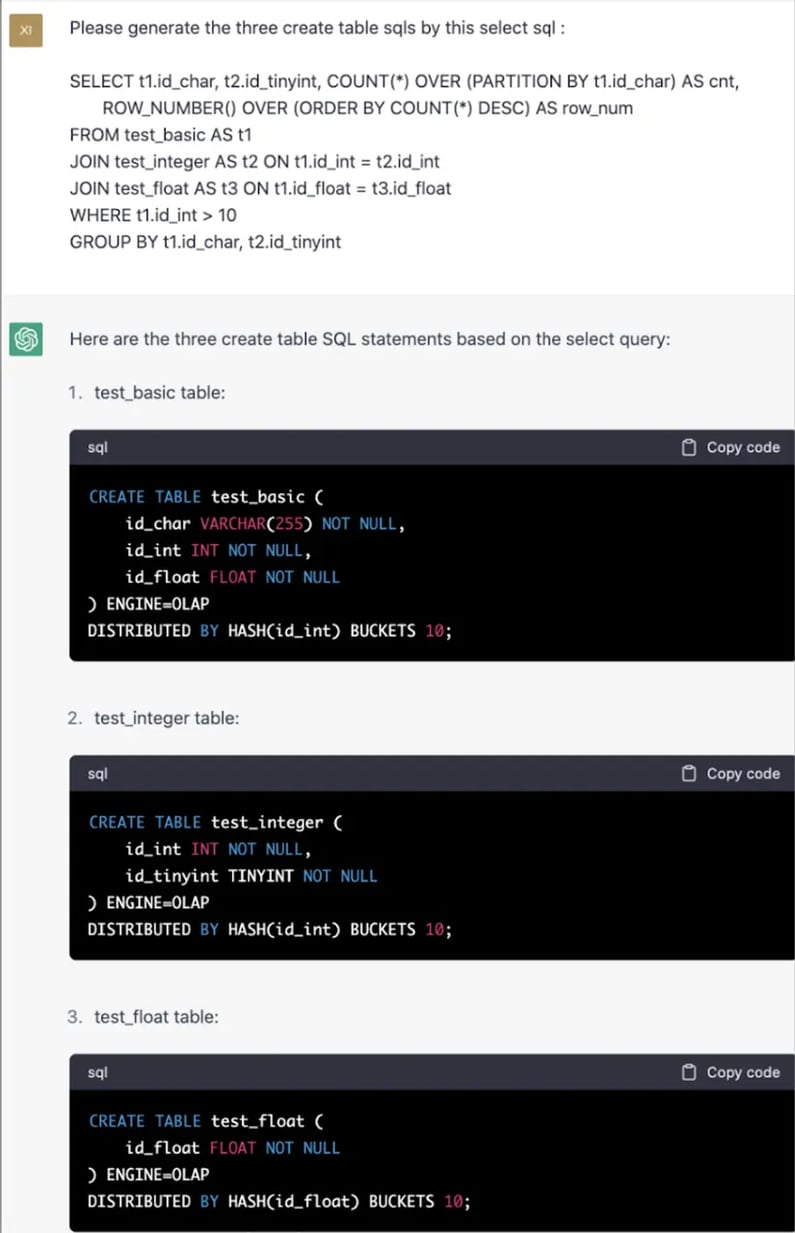

The following figure offers a great example of how ChatGPT constructs a complex SQL query based on a user's input:

Figure 1: ChatGPT creating a complex query.

ChatGPT: Great for Maintaining SQLsmith

While testing ChatGPT against SQLsmith, another valuable use case presented itself: ChatGPT could be great for maintaining automated database testing tools. Anyone who has used these tools recognizes this is a major pain point. Every time a new function, operator, or data type is added, engineers need to update the source code of their tools like SQLsmith and SQLancer. This is both tedious and time-consuming.

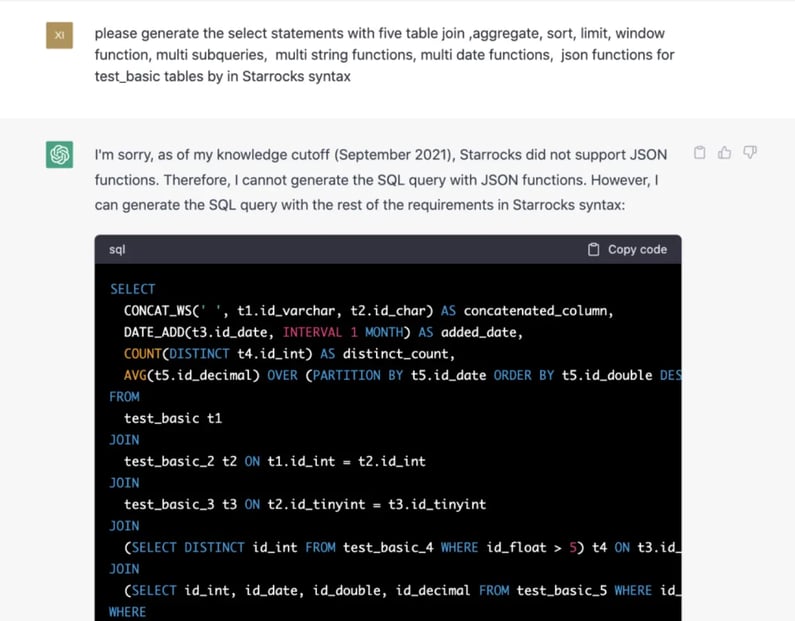

To validate whether ChatGPT could effectively take on this task, StarRocks developers asked ChatGPT to write a query using StarRocks JSON functions. Initially, ChatGPT replied that StarRocks didn't support JSON functions, but after feeding StarRocks' JSON docs to ChatGPT, ChatGPT was able to learn from the docs and successfully generates an SQL query with StarRocks JSON functions.

The following figures show this learning process in action:

Figure 2: In some cases, ChatGPT will need to be trained a bit before it's ready to go.

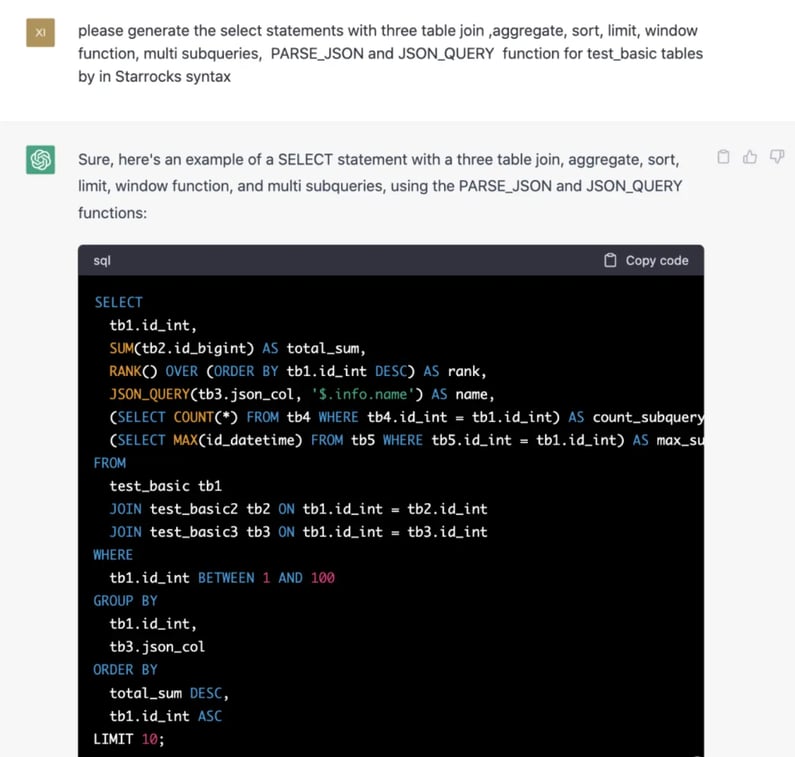

Figure 3: Training ChatGPT to understand StarRocks' JSON functions.

Figure: 4: ChatGPT generates a SQL query with StarRocks JSON functions.

It's clear that ChatGPT may not be ready to go right out of the box, but it demonstrates an amazing ability to quickly learn and adapt when the necessary learning materials are provided.

ChatGPT vs. SQLancer

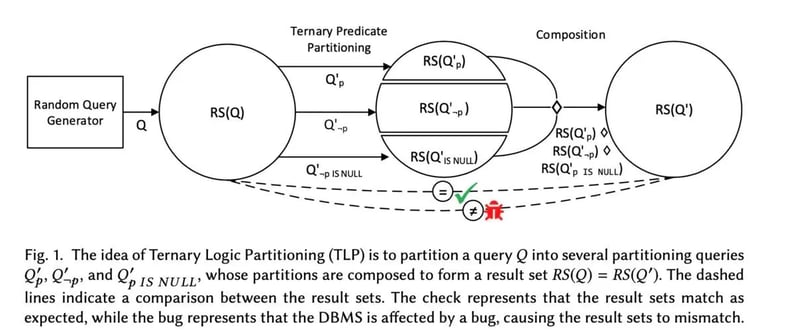

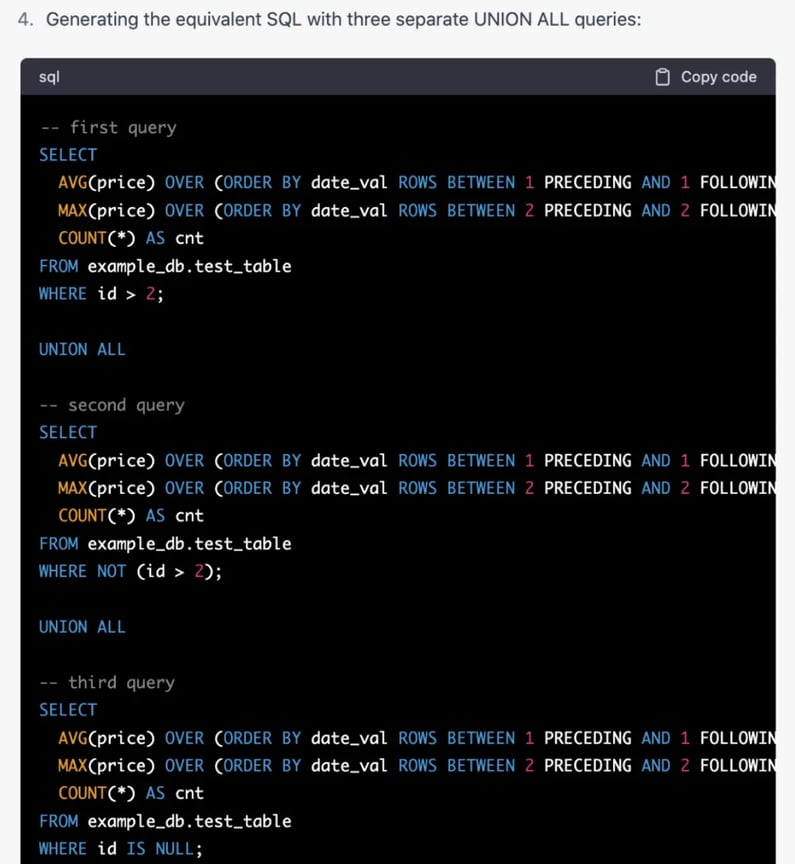

Seeing how well ChatGPT fared versus SQLsmith, it made sense to see how ChatGPT compared to SQLancer by testing against SQLancer's Ternary Logic Partitioning (TLP) principle.

Figure 5: Ternary Logic Partitioning (TLP).

The preceding figure is a schematic of the TLP principle, in which a random SQL query (Q) and a predicate (P) are provided, with three predicates (P, not P, and P is null) being applied to the query. A Union All is then performed on the results of the three queries. The checkmark in the diagram means the result is consistent with the original SQL query and the bug means the opposite.

Initially, this was not something ChatGPT could handle, but after nearly a dozen training sessions, ChatGPT developed the ability to generate an equivalent SQL based on the TLP principle.

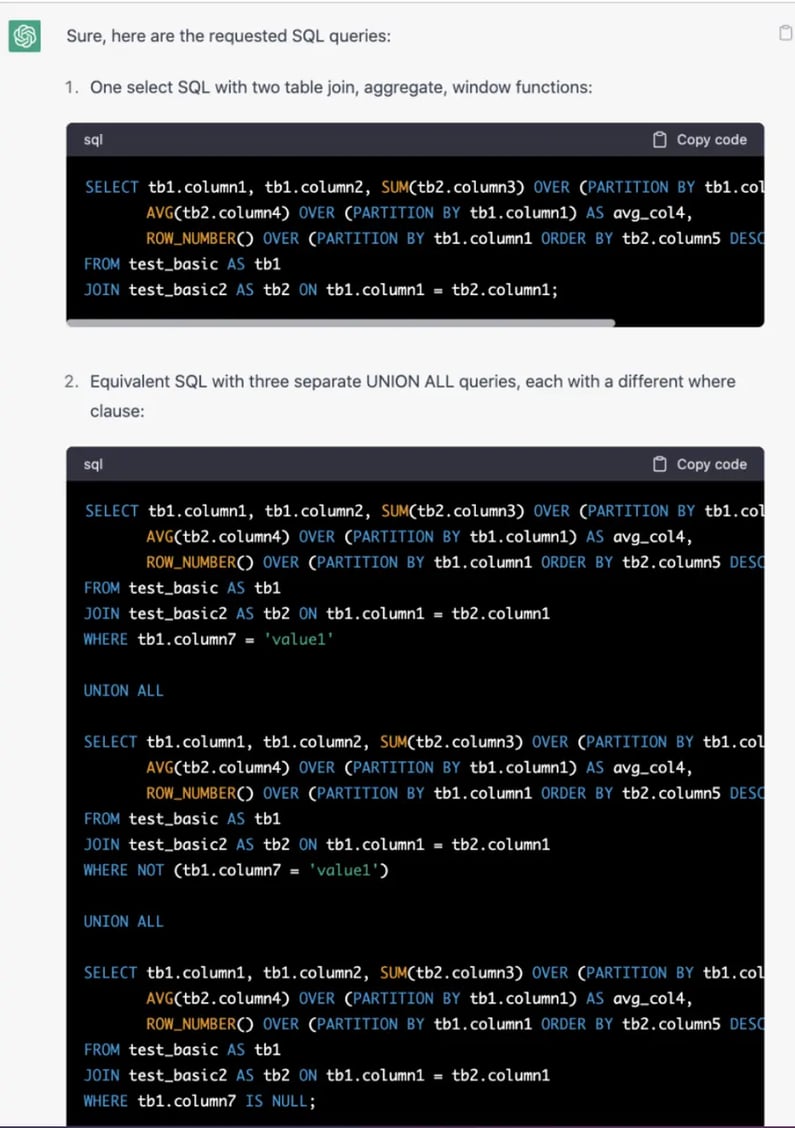

Figure 6: ChatGPT develops SQL based on TLP.

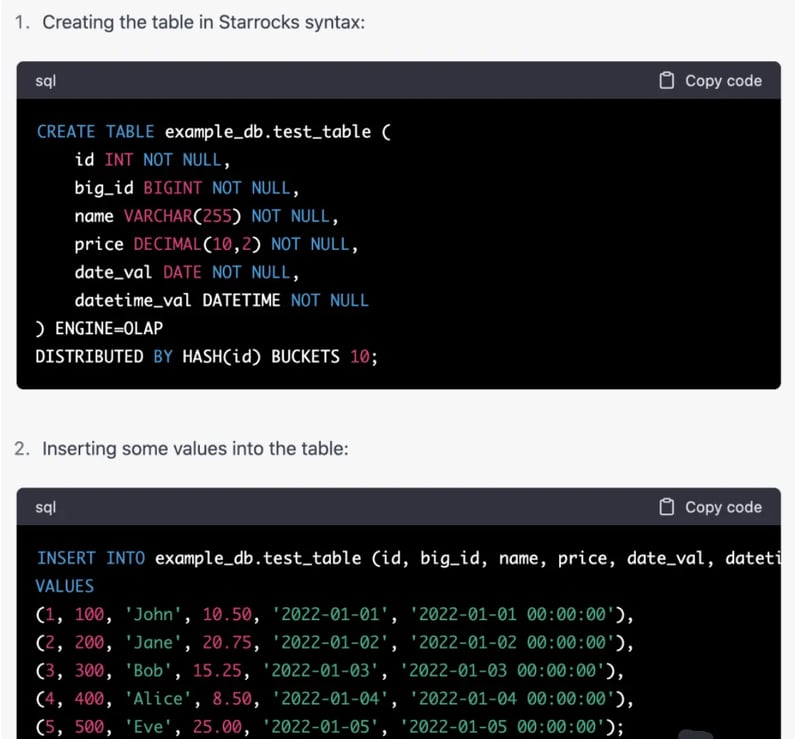

This, however, doesn't prove by itself that ChatGPT can act as a replacement to SQLancer. Since a complete SQLancer TLP test involves two additional steps: random table creation and data insertion, ChatGPT needs to hand this as well. Fortunately, this is very simple for ChatGPT. You can see the process below:

Figure 7: Providing instructions to ChatGPT.

Figure 8: ChatGPT generates the table in StarRocks syntax.

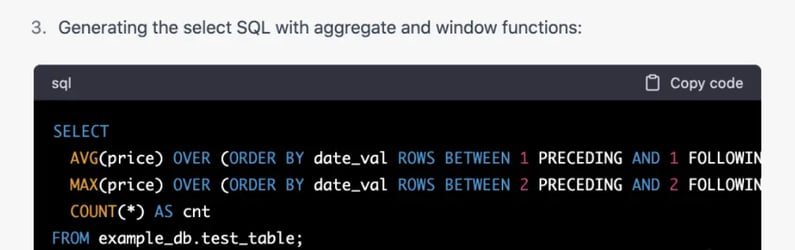

Figure 9: ChatGPT generates the select SQL with aggregate and window functions.

Figure 10: ChatGPT generates SQL with three separate UNION ALL queries.

ChatGPT for Query-Aware Table Creation Statements

By this point, the StarRocks community was able to confirm that ChatGPT could (to a reasonable degree):

-

Automate table creation and data loading.

-

Automatically generate test SQL.

-

Automatically generate a test oracle (confirm whether the SQL execution results are as expected).

And while these initial results from the ChatGPT vs. SQLancer experiments were exciting, they didn't completely answer the question as to whether or not ChatGPT can act as a replacement for these testing tools. In order to better answer this question, ChatGPT needs to be able to prove that it can handle four additional requirements. Those are:

-

Automatically generated SQL must be syntactically and semantically correct.

-

Automatically generated schema and datasets must be valid.

-

Automatically generated SQL code can cover a wide variety of scenarios.

-

There is an efficient way to verify that the SQL execution result is correct.

Query-Aware table creation statements are actually a great solution for addressing the requirements and challenges listed above. This made testing ChatGPT's ability to generate Query-Aware table creation statements a reliable way to evaluate ChatGPT's effectiveness as a testing tool.

Query-Aware means that table creation and data loading are oriented for SQL queries and guarantee that the test query can get the desired query results as defined in a given test case. It turns out that ChatGPT can handle this with ease.

Figure 11: Testing ChatGPT for Query-Aware table creation statements.

ChatGPT: A More Efficient Way To Test Databases

The StarRocks community's tests show that ChatGPT has great potential for improving automated testing efficiency, but it still has some work to do. ChatGPT still requires additional support to fully understand the basic logic of automated testing, such as the basic rewrite principles of SQLancer's TLP.

After dozens of hours of training, ChatGPT still does not fully understand the TLP principle, and the predicates in some of its rewritten SQL are wrong. All things considered, however, sufficient training may help ChatGPT fully understand this logic at some point in the near future.

This doesn't seem like such a high bar for ChatGPT to clear, as ChatGPT's biggest advantage is its amazing (and scary) ability to learn quickly. Even if it may be a while before ChatGPT is able to write test cases for new features more efficiently than humans, there's no doubt that ChatGPT will help humans improve the efficiency and accuracy of the work they're doing today.

CelerData