The future looks bright for open data lakehouses, and for good reason. The term is more than marketing: it describes a practical approach to the future of data analytics. In this article, we'll look at the concept of open data lakehouses and their benefits, then wrap up with what it all means for Databricks, StarRocks, and CelerData users.

The Power of Openness: A Core Principle of Data Lakehouse Architectures

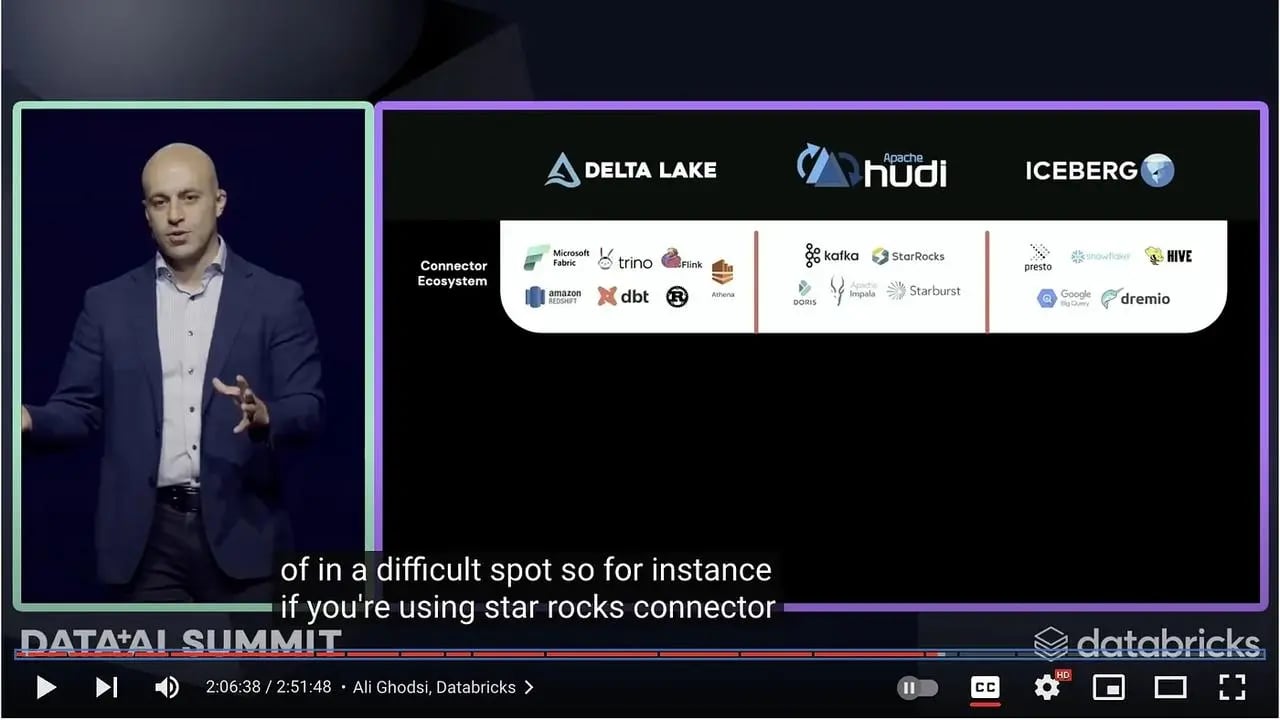

During the recent Data + AI Summit hosted by Databricks, StarRocks, the Linux Foundation's open-source real-time OLAP database, received a nod from Databricks CEO Ali Ghodsi. The acknowledgment followed StarRocks' successful integration into Databricks' open data lakehouse architecture, and it also recognizes the project's efforts to support greater openness across the analytics industry.

Databricks CEO Ali Ghodsi calls out StarRocks during his keynote at Databricks Data + AI Summit

Openness stands as the guiding principle for the development and implementation of data lakehouse architectures, and this openness confers a number of big advantages:

- Interoperability: Open systems integrate cleanly with diverse tools and systems, so organizations can choose the tool best suited to their needs without worrying about compatibility.

- Avoid Vendor Lock-In: Openness gives users the freedom to switch between tools and providers, including between commercial and open-source offerings.

- Innovation: Open systems often benefit from rapid innovation thanks to the broad developer community contributing to them, which leads to faster introduction of cutting-edge technologies and features.

- Transparency: Open systems, with their visible codebase and operational procedures, enhance trust and improve the overall visibility and security of the system.

The Evolution of Openness: Databricks Delta Lake 3.0 and StarRocks

Embracing the benefits of openness, Databricks introduced Delta Lake 3.0, setting a new standard for data storage and retrieval. One of its more notable features is the Delta Universal Format (UniForm). UniForm allows data stored in Delta to be interpreted as if it were Iceberg or Hudi. It effectively eliminates the need for users to manually convert or choose between formats, instead offering a unified data format for seamless integration between the three.

The development of Delta Lake 3.0 and UniForm was welcome news to the StarRocks community, which has always been geared towards pushing the limits of database performance through openness. Focusing on performance has naturally meant building for flexibility, which is why StarRocks has created deep integrations with open data lake formats, including Apache Hive, Apache Hudi, Apache Iceberg, and Delta Lake.

The introduction of Delta Lake UniForm creates an opportunity for StarRocks to perform sub-second analytics over multiple open data formats without copying data. This combination extends sub-second analytics to a broader audience, making advanced data analytics more accessible than ever. StarRocks and Delta Lake complement each other well in this new era of open data lake analytics.

The Future of Open Data Lakehouse with StarRocks and Delta Lake

As we look to the future, we remain committed to making data processing simpler, faster, and more effective. The integration of StarRocks with Delta Lake begins a new chapter in the story of open data lakehouse architectures.

By combining capabilities, we look forward to continuing to serve as a reliable, high-performance query layer that strengthens the foundation of the data lakehouse model. Our joint journey with the Delta Lake Federation is poised to bring innovative solutions to analytics, demonstrating the power and potential of a truly open data lakehouse architecture.

And if you'd like to experience this openness for yourself, now's a great time. You can register for CelerData Cloud and claim a 30-day free trial right now. Sign up here.

Sida Shen